ReframeX, our AI video repurposing platform, is built on the same computer vision and media processing infrastructure that powers Videograph’s enterprise video solutions. In this technical deep-dive, we explain how five years of AI video innovation became the technology engine behind ReframeX’s smart crop, auto captions, intelligent clip generation, and split-screen layouts.

The AI Foundation — Five Years of Broadcast-Scale Computer Vision

Videograph’s AI platform was originally designed to solve challenges that broadcasters and OTT platforms face daily: how to process, analyze, and optimize massive volumes of video content in real time. Over five years, we built specialized AI models for scene detection, facial recognition, motion tracking, speech analysis, and content classification.

These models were trained on hundreds of thousands of hours of diverse video content — live news broadcasts, sports events, entertainment programming, podcast recordings, and corporate presentations. This training diversity is what makes Videograph’s AI exceptionally accurate across content types. When we built ReframeX, we did not start from scratch — we adapted these battle-tested models for the specific needs of short-form content creation.

Smart Crop — From Broadcast Monitoring to Intelligent Reframing

In Videograph’s enterprise platform, our subject-tracking AI monitors live broadcasts to detect faces, track speakers, and analyze visual composition in real time. News networks use this technology to automatically generate close-up crops of speakers during debates, interviews, and panel discussions.

For ReframeX’s Smart Crop, we adapted this same subject-tracking engine for a different purpose: converting landscape 16:9 video into portrait 9:16 format for TikTok, Instagram Reels, and YouTube Shorts. The core AI model is unchanged. It detects faces, tracks motion, and follows subjects frame by frame. What changed is the output, from broadcast monitoring dashboards to mobile-ready vertical clips.

Smart Crop’s accuracy is inherited directly from years of broadcast deployment. Our subject tracking achieves 99.2 percent accuracy because it was trained on some of the most demanding content there is: live news and sports broadcasts with rapid camera movements, multiple on-screen personalities, and unpredictable visual compositions. If the AI can track a football player sprinting across a field during a live broadcast, it can certainly follow a podcaster sitting at a desk.
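The geometry behind this kind of reframing can be sketched in a few lines of Python. This is a simplified illustration, not ReframeX’s production code: it assumes an upstream tracker (not shown) already supplies the subject’s horizontal center in each frame, and the names `vertical_crop_windows`, `CropWindow`, and the `smoothing` factor are hypothetical. The sketch cuts a full-height 9:16 window from a landscape frame, eases the window toward the subject with an exponential moving average so the virtual camera does not jitter, and clamps it to the frame edges.

```python
from dataclasses import dataclass


@dataclass
class CropWindow:
    x: int       # left edge, in source pixels
    y: int       # top edge, in source pixels
    width: int
    height: int


def vertical_crop_windows(frame_w, frame_h, subject_xs, smoothing=0.8):
    """Hypothetical helper: one 9:16 crop window per frame, following the subject.

    subject_xs: per-frame horizontal center of the tracked subject (pixels),
                assumed to come from a separate face/subject tracker.
    smoothing:  exponential-moving-average factor; higher values give a
                steadier (slower-reacting) virtual camera.
    """
    crop_h = frame_h                       # use the full source height
    crop_w = round(frame_h * 9 / 16)       # width that yields a 9:16 window
    if crop_w > frame_w:
        raise ValueError("source frame is too narrow for a 9:16 crop")

    windows = []
    center = None
    for x in subject_xs:
        # Ease toward the new subject position instead of jumping to it.
        center = x if center is None else smoothing * center + (1 - smoothing) * x
        left = round(center - crop_w / 2)
        left = max(0, min(left, frame_w - crop_w))  # keep the window inside the frame
        windows.append(CropWindow(left, 0, crop_w, crop_h))
    return windows
```

For a 1920x1080 source, each window is 608x1080 pixels, and a subject at the exact center of the frame yields a window starting at x = 656. A subject near either edge pins the window to that edge rather than letting it run off-frame.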

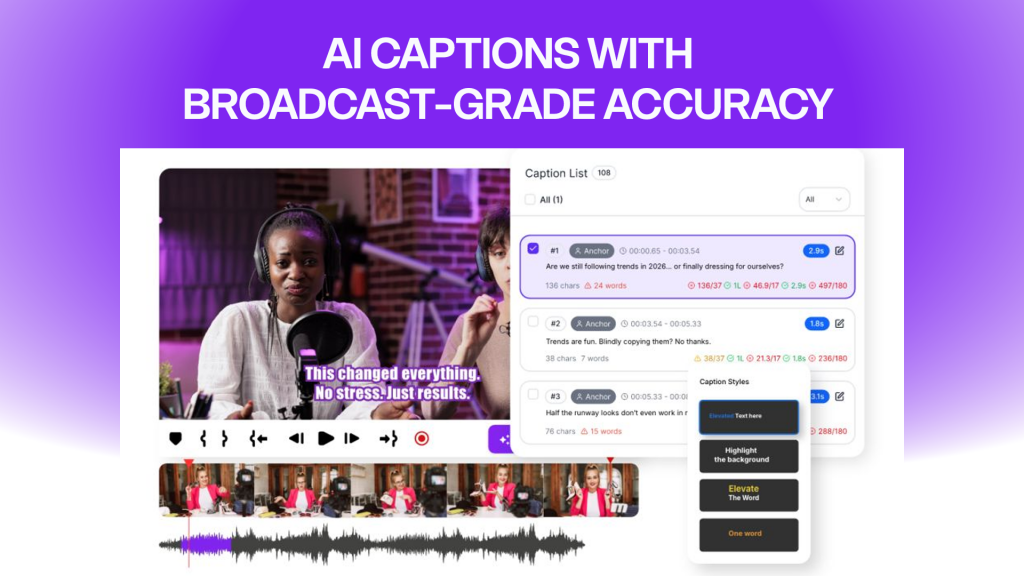

AI Caption Generator — From OTT Subtitling to Creator Captions

Videograph’s speech-to-text engine was built to serve OTT platforms that distribute content across dozens of markets and languages. Our enterprise clients require accurate transcription and translation in over 160 languages, with support for regional accents, colloquial speech, and technical terminology. The system processes audio at broadcast scale — thousands of hours per day with 98 percent or higher accuracy.

ReframeX’s AI Caption Generator uses this same multilingual engine. When a creator uploads a video and clicks Generate Captions, the request is processed by the same speech-to-text infrastructure that subtitles content for major OTT platforms. The difference is the output format: instead of plain SRT subtitle files, ReframeX delivers animated, styled captions with word-by-word highlighting optimized for social media engagement.

This shared infrastructure is why ReframeX supports 160+ languages from day one. We did not integrate a third-party transcription API — we deployed our own enterprise-grade engine, giving creators broadcast-quality accuracy at consumer pricing.
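To illustrate the difference in output, the sketch below takes the kind of word-level timestamps a speech-to-text engine might return and renders them two ways: as a conventional SRT cue, and as a sequence of word-by-word highlight states. The `Word` structure and the function names are hypothetical, square brackets stand in for visual highlighting, and a real caption renderer would also handle styling, animation, and line breaking.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds
    end: float    # seconds


def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    ms = round(seconds * 1000)
    h, ms = divmod(ms, 3_600_000)
    m, ms = divmod(ms, 60_000)
    s, ms = divmod(ms, 1000)
    return f"{h:02d}:{m:02d}:{s:02d},{ms:03d}"


def to_srt_cue(index: int, words: list[Word]) -> str:
    """A conventional SRT cue: the whole line is shown for its full time span.

    When writing a file, consecutive cues are separated by a blank line.
    """
    text = " ".join(w.text for w in words)
    timing = f"{srt_timestamp(words[0].start)} --> {srt_timestamp(words[-1].end)}"
    return f"{index}\n{timing}\n{text}\n"


def highlight_states(words: list[Word]) -> list[tuple[float, float, str]]:
    """Word-by-word highlighting: the same line is re-emitted once per word,
    with the word currently being spoken marked (here with [brackets])."""
    states = []
    for i, current in enumerate(words):
        line = " ".join(f"[{w.text}]" if j == i else w.text for j, w in enumerate(words))
        states.append((current.start, current.end, line))
    return states
```

With `words = [Word("Hello", 0.0, 0.4), Word("world", 0.4, 0.9)]`, the SRT cue shows "Hello world" from `00:00:00,000` to `00:00:00,900`, while the highlight states show "[Hello] world" for the first 0.4 seconds and then "Hello [world]".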

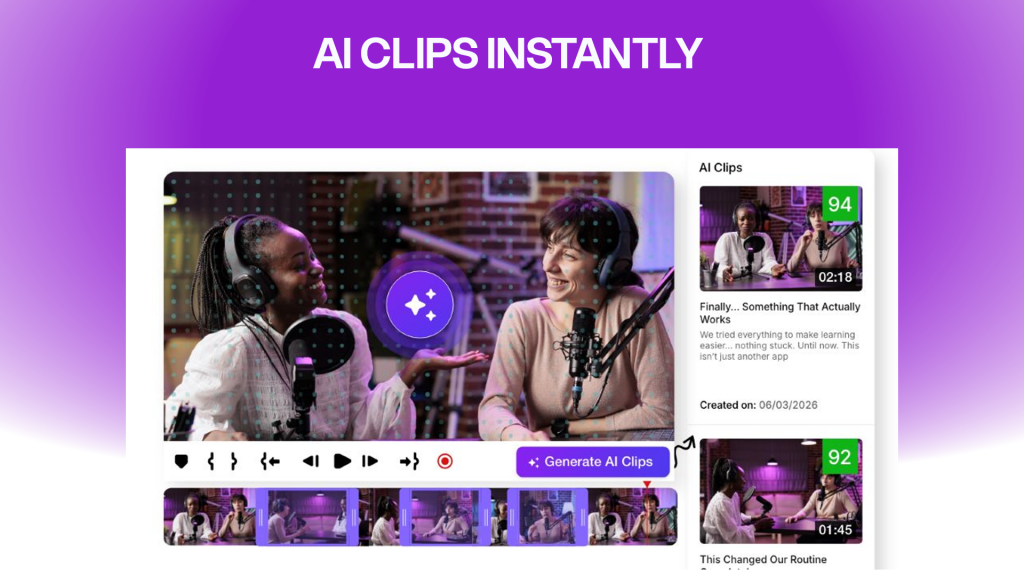

AI Clip Generator — From Broadcast Highlights to Viral Moments

Videograph’s broadcast clients have long relied on our AI to automatically detect highlight-worthy moments during live events. Sports teams and leagues use our scene detection and emotion recognition models to generate real-time highlight reels during matches. News broadcasters use the same technology to extract breaking news clips and soundbites.

ReframeX’s AI Clip Generator brings this highlight detection technology to every creator. Upload a long-form video — a podcast, webinar, interview, or YouTube video — and the AI identifies the most engaging moments using the same multi-signal analysis our broadcast clients rely on: speech energy, emotional peaks, visual action, and scene changes. Each suggested clip is scored for predicted engagement, giving creators a data-driven starting point for their short-form content strategy.
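One simple way to combine several signals into clip suggestions is a weighted sum over fixed-length windows, followed by a greedy pass that keeps the best non-overlapping windows. The sketch below is a stand-in for illustration, not ReframeX’s actual engagement model: it assumes each signal (speech energy, emotion, visual action, scene changes) has already been extracted and normalized to one value per second in [0, 1], and the signal names, weights, and functions are hypothetical.

```python
def score_windows(signals: dict[str, list[float]],
                  weights: dict[str, float],
                  window: int) -> list[tuple[int, float]]:
    """Return (start_second, score) for every window of `window` seconds.

    signals: per-second values for each signal, all of equal length (hypothetical input).
    weights: relative importance of each signal (hypothetical values).
    """
    n = len(next(iter(signals.values())))
    # Collapse all signals into a single weighted score per second.
    per_second = [sum(w * signals[name][t] for name, w in weights.items()) for t in range(n)]
    # Average the per-second scores over each candidate window.
    return [
        (start, sum(per_second[start:start + window]) / window)
        for start in range(n - window + 1)
    ]


def top_clips(scores: list[tuple[int, float]], window: int, k: int) -> list[tuple[int, float]]:
    """Greedily keep the k highest-scoring windows that do not overlap."""
    chosen: list[tuple[int, float]] = []
    for start, score in sorted(scores, key=lambda s: s[1], reverse=True):
        # Two equal-length windows overlap when their starts are closer than `window`.
        if all(abs(start - c) >= window for c, _ in chosen):
            chosen.append((start, score))
        if len(chosen) == k:
            break
    return sorted(chosen)  # present suggestions in timeline order
```

In practice, weights like these would typically be tuned or learned from data rather than fixed by hand; the greedy step simply prevents the suggestions from being near-duplicates of the same moment.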

Split Screen — From Multi-Camera Broadcast to Vertical Interviews

Broadcast workflows frequently involve multi-camera productions where directors switch between speakers, wide shots, and close-ups. Videograph’s AI identifies individual speakers in multi-camera feeds and tracks them across camera angles.

ReframeX’s Split Screen adapts this multi-speaker detection for vertical content. When a creator uploads a landscape podcast or interview with two speakers, the AI automatically identifies both participants and creates a stacked vertical layout, with one speaker on top and the other on the bottom. This produces native-looking 9:16 interview clips without manual editing.
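The stacked layout reduces to choosing, for each speaker, a source crop whose aspect ratio matches one half of the vertical frame. For a 1080x1920 output, each half is 1080x960, a 9:8 ratio. The following sketch assumes an upstream speaker detector (not shown) has already located the two speakers’ centers; the `stacked_split` function, the `Box` type, and the `crop_h` framing parameter are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Box:
    x: int
    y: int
    w: int
    h: int


def stacked_split(src_w, src_h, speaker_centers, out_w=1080, out_h=1920, crop_h=None):
    """Hypothetical two-speaker top/bottom layout for a vertical output.

    speaker_centers: [(x, y), (x, y)] pixel centers of the two speakers,
                     assumed to come from a separate speaker detector.
    crop_h:          height of each source crop; smaller values frame tighter.
    Returns [(source_box, destination_box), ...], first speaker on top.
    """
    if len(speaker_centers) != 2:
        raise ValueError("this sketch handles exactly two speakers")
    tile_h = out_h // 2                       # each speaker gets half the output height
    crop_h = crop_h or src_h
    crop_w = round(crop_h * out_w / tile_h)   # match the tile's aspect ratio (9:8 here)
    if crop_w > src_w or crop_h > src_h:
        raise ValueError("crop does not fit inside the source frame")

    layout = []
    for i, (cx, cy) in enumerate(speaker_centers):
        # Center the crop on the speaker, then clamp it inside the source frame.
        x = min(max(round(cx - crop_w / 2), 0), src_w - crop_w)
        y = min(max(round(cy - crop_h / 2), 0), src_h - crop_h)
        layout.append((Box(x, y, crop_w, crop_h), Box(0, i * tile_h, out_w, tile_h)))
    return layout
```

For a 1920x1080 source with speakers centered at (480, 540) and (1440, 540) and `crop_h=720`, each source crop is 810x720 and is scaled into a 1080x960 tile, one at y = 0 and one at y = 960 in the output.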

What This Means for Creators and Teams

When you use ReframeX, you are not using a startup’s MVP — you are accessing AI that has been refined through five years of enterprise deployment, processing millions of hours of content for some of the world’s most demanding video organizations. The accuracy, speed, and reliability you experience are direct results of this broadcast heritage.

This is what separates ReframeX from tools built on generic third-party AI APIs. Our technology is proprietary, trained on diverse real-world content, and optimized for the specific challenges of video repurposing.

Try ReframeX free and experience broadcast-grade AI in a creator-friendly platform. Built by Videograph. Powered by five years of innovation. Designed for everyone.

— The Videograph Engineering Team (a YuppTV company)